Data science is an interdisciplinary field that mines raw data, analyses it, and finds patterns that yield valuable insights. Statistics, computer science, machine learning, deep learning, data analysis, data visualization, and various other technologies form its core foundation. Harvard Business Review called data scientist the “Sexiest Job of the 21st Century,” and Glassdoor placed it #1 on its 25 Best Jobs in America list.

According to IBM, demand for this role will soar 28 percent by 2020. It should come as no surprise that in the new era of big data and machine learning, data scientists are becoming rock stars. There are broadly 4 categories in which any data science question can be classified:

Ques: What is regularization? Why is it useful?

Regularization is the process of adding a penalty term, scaled by a tuning parameter, to a model's loss function to induce smoothness and prevent overfitting. This is most often done by adding a constant multiple of a norm of the weight vector to the loss: the L1 norm (Lasso) or the squared L2 norm (Ridge). The model is then trained to minimize this regularized loss on the training set.
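As a minimal sketch of the idea, the snippet below adds an L1 or L2 penalty to a mean squared error loss for a small linear model. The function names, the toy data, and the `lam` tuning parameter are illustrative assumptions, not taken from any particular library.

```python
def mse(weights, rows, targets):
    """Mean squared error of a linear model with no intercept."""
    total = 0.0
    for features, target in zip(rows, targets):
        prediction = sum(w * x for w, x in zip(weights, features))
        total += (prediction - target) ** 2
    return total / len(rows)


def l1_penalty(weights):
    """Lasso penalty: sum of the absolute values of the weights."""
    return sum(abs(w) for w in weights)


def l2_penalty(weights):
    """Ridge penalty: sum of the squared weights."""
    return sum(w * w for w in weights)


def regularized_loss(weights, rows, targets, lam, penalty=l2_penalty):
    """Data loss plus lam times the chosen penalty on the weights."""
    return mse(weights, rows, targets) + lam * penalty(weights)


# Toy data (made up for illustration); these weights fit it exactly, so MSE is 0.
rows = [[1.0, 2.0], [2.0, 1.0], [3.0, 3.0]]
targets = [5.0, 4.0, 9.0]
weights = [1.0, 2.0]

print(regularized_loss(weights, rows, targets, lam=0.1))                      # ridge
print(regularized_loss(weights, rows, targets, lam=0.1, penalty=l1_penalty))  # lasso
```

Because the data loss is zero here, the printed values are just the penalties: larger weights cost more, which is what pushes a regularized model toward simpler fits.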

Ques: List down the conditions for Overfitting and Underfitting.

Ques: How do you build a random forest model?

A random forest is made up of a number of decision trees. If you split the data into different subsets and build a decision tree on each subset, the random forest brings all those trees together and combines their predictions. Steps to build a random forest model:

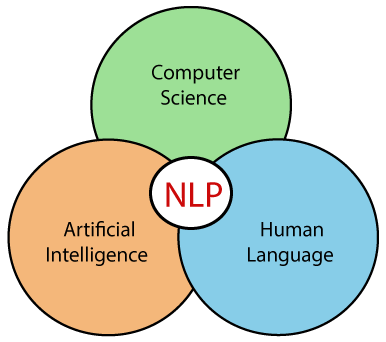
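To make the general recipe concrete, here is a deliberately simplified sketch: each "tree" is a one-split decision stump trained on a bootstrap sample with one randomly chosen feature, and the forest predicts by majority vote. Real random forests grow deeper trees and pick a random feature subset at every split; the function names and toy data here are assumptions for illustration only.

```python
import random
from collections import Counter


def train_stump(rows, labels, feature):
    """Fit a one-split tree on one feature: (feature, threshold, left, right)."""
    best = None
    values = sorted({row[feature] for row in rows})
    # Candidate thresholds are midpoints between consecutive distinct values.
    for low, high in zip(values, values[1:]):
        threshold = (low + high) / 2
        left = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
        right = [y for row, y in zip(rows, labels) if row[feature] > threshold]
        left_label = Counter(left).most_common(1)[0][0]
        right_label = Counter(right).most_common(1)[0][0]
        errors = sum(y != left_label for y in left) + sum(y != right_label for y in right)
        if best is None or errors < best[0]:
            best = (errors, threshold, left_label, right_label)
    if best is None:  # only one distinct value: predict the majority label
        majority = Counter(labels).most_common(1)[0][0]
        return (feature, rows[0][feature], majority, majority)
    return (feature, best[1], best[2], best[3])


def train_forest(rows, labels, n_trees=25, seed=0):
    """Train n_trees stumps, each on a bootstrap sample and a random feature."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        sample_rows = [rows[i] for i in idx]
        sample_labels = [labels[i] for i in idx]
        feature = rng.randrange(len(rows[0]))
        forest.append(train_stump(sample_rows, sample_labels, feature))
    return forest


def predict(forest, row):
    """Majority vote over the predictions of all stumps."""
    votes = [left if row[f] <= t else right for f, t, left, right in forest]
    return Counter(votes).most_common(1)[0][0]


# Toy data: two well-separated clusters (made up for illustration).
rows = [[1, 1], [2, 1], [1, 2], [2, 2], [8, 8], [9, 8], [8, 9], [9, 9]]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
forest = train_forest(rows, labels)
print(predict(forest, [1, 1]), predict(forest, [9, 9]))
```

In practice you would more likely reach for a library implementation; the point of the sketch is only to show the three ingredients of bootstrap sampling, randomized trees, and voting.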

Ques: What is Natural Language Processing? State some real-life examples of NLP.

Natural Language Processing is a branch of Artificial Intelligence that deals with the conversion of human language into a machine-understandable form so that it can be processed by ML models. Examples: NLP has many practical applications, including chatbots, Google Translate, and real-time assistants like Alexa. Other applications of NLP include text completion, text suggestions, and sentence correction.

Ques: What is the bias-variance trade-off?

Bias: Bias is an error introduced in your model due to oversimplification of the machine learning algorithm. It can lead to underfitting. When you train your model, it makes simplifying assumptions so that the target function is easier to learn. Low-bias machine learning algorithms: Decision Trees, k-NN, and SVM. High-bias machine learning algorithms: Linear Regression, Logistic Regression.

Variance: Variance is an error introduced in your model by an overly complex machine learning algorithm: the model also learns the noise in the training data set and performs badly on the test data set. It can lead to high sensitivity and overfitting. Normally, as you increase the complexity of your model, you will see a reduction in error due to lower bias in the model. However, this only happens up to a particular point. As you continue to make your model more complex, you end up overfitting, and the model starts suffering from high variance.

Bias-Variance trade-off: The goal of any supervised machine learning algorithm is to have low bias and low variance to achieve good prediction performance.

There is no escaping the relationship between bias and variance in machine learning. Increasing the bias will decrease the variance. Increasing the variance will decrease bias.

Eight rules of probability

Counting Methods

Factorial Formula: n! = n x (n - 1) x (n - 2) x … x 2 x 1

Use when the number of items is equal to the number of places available and repetition is not allowed. E.g. Find the total number of ways 5 people can sit in 5 empty seats.

= 5 x 4 x 3 x 2 x 1 = 120

Fundamental Counting Principle (multiplication)

This method should be used when repetitions are allowed and the number of ways to fill an open place is not affected by previous fills.

E.g. There are 3 types of breakfasts, 4 types of lunches, and 5 types of desserts. The total number of combinations is 3 x 4 x 5 = 60

Permutations: P(n,r)= n! / (n−r)!

This method is used when replacement is not allowed and the order of the items matters.

E.g. A code has 4 digits in a particular order, and each digit ranges from 0 to 9. How many permutations are there if each digit can be used only once?

P(10,4) = 10!/(10-4)! = (10x9x8x7x6x5x4x3x2x1)/(6x5x4x3x2x1) = 10x9x8x7 = 5040

Combinations Formula: C(n,r)=(n!)/[(n−r)!r!]

This is used when replacement is not allowed and the order in which items are ranked does not matter. E.g. To win the lottery, you must select the 5 correct numbers in any order from 1 to 52. What is the number of possible combinations?

C(52,5) = 52! / [(52-5)! 5!] = 2,598,960
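The four worked examples above can be checked with Python's standard `math` module. This is a minimal sketch; it assumes Python 3.8 or later, where `math.perm` and `math.comb` are available, and the variable names are illustrative.

```python
import math

# Factorial: 5 people in 5 seats, no repetition.
seatings = math.factorial(5)

# Fundamental counting principle: independent choices multiply.
meals = 3 * 4 * 5

# Permutations: 4-digit code from 10 digits, no digit reused, order matters.
codes = math.perm(10, 4)

# Combinations: 5 numbers out of 52, order does not matter.
tickets = math.comb(52, 5)

print(seatings, meals, codes, tickets)  # 120 60 5040 2598960
```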

Ques: A jar holds 1000 coins: 999 are fair and 1 is double-headed. You pick one coin at random and toss it 10 times, and you see 10 heads. Estimate the probability of getting a head on the next toss of that coin.

We know that there are two types of coins - fair and double-headed. Hence, there are two possible ways of choosing a coin. The first is to choose a fair coin and the second is to choose a coin having 2 heads.

P(selecting fair coin) = 999/1000 = 0.999

P(selecting double headed coin) = 1/1000 = 0.001

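Using the law of total probability (the denominator in Bayes' rule),

P(10 heads in a row) = P(selecting fair coin) * P(10 heads | fair coin) + P(selecting double-headed coin) * P(10 heads | double-headed coin)

P(10 heads in a row) = P(A) + P(B), where A is selecting the fair coin and seeing 10 heads, and B is selecting the double-headed coin and seeing 10 heads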

P (A) = 0.999 * (1/2)^10

= 0.999 * (1/1024)

= 0.000976

P (B) = 0.001 * 1 = 0.001

P(fair coin | 10 heads) = P(A) / (P(A) + P(B)) = 0.000976 / (0.000976 + 0.001) = 0.4939

P(double-headed coin | 10 heads) = P(B) / (P(A) + P(B)) = 0.001 / 0.001976

= 0.5061

P(head on next toss) = P(fair coin | 10 heads) * 0.5 + P(double-headed coin | 10 heads) * 1

= 0.4939 * 0.5 + 0.5061

= 0.7531
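The same calculation can be reproduced with exact fractions, which avoids intermediate rounding. This is a small sketch using Python's standard `fractions` module; the variable names are illustrative.

```python
from fractions import Fraction

# Priors: chance of drawing each kind of coin from the jar.
prior_fair = Fraction(999, 1000)
prior_double = Fraction(1, 1000)

# Likelihoods: chance of 10 heads in a row with each kind of coin.
like_fair = Fraction(1, 2) ** 10
like_double = Fraction(1)

# Total probability of the evidence, then the posteriors via Bayes' rule.
evidence = prior_fair * like_fair + prior_double * like_double
post_fair = prior_fair * like_fair / evidence
post_double = prior_double * like_double / evidence

# Probability of a head on the next toss, weighted by the posteriors.
p_next_head = post_fair * Fraction(1, 2) + post_double * 1

print(post_fair, post_double, p_next_head)  # 999/2023 1024/2023 3047/4046
print(round(float(p_next_head), 4))         # 0.7531
```

The exact posteriors differ from the hand calculation in the fourth decimal place because the hand calculation rounds P(A) to 0.000976 early; the final answer still rounds to 0.7531.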

Ques: What are Markov chains?

A Markov chain is a type of stochastic process. In a Markov chain, the probability of each future state depends only on the current state.

An example is word recommendation. When we type a paragraph, the model suggests the next word based only on the previous word and not on anything before it. The Markov chain model is trained beforehand on similar text, storing, for every word in the training data, the words that follow it. The next words are then suggested from these stored counts.
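The word-suggestion example can be sketched as a first-order (bigram) model: count which word follows each word in some training text, then suggest the most frequent follower. The function names and the training sentence below are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    transitions = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        transitions[current][following] += 1
    return transitions


def suggest_next(transitions, word):
    """Return the most frequent follower of `word`, or None if it has none."""
    followers = transitions.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


training_text = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(training_text)

print(suggest_next(model, "the"))   # cat
print(suggest_next(model, "on"))    # the
print(suggest_next(model, "sofa"))  # None
```

The suggestion depends only on the current word, which is exactly the Markov property described above; dividing each count by the row total would turn the counts into transition probabilities.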

Ques: If there are 8 marbles of equal weight and 1 marble that weighs a little bit more (for a total of 9 marbles), how many weighings are required to determine which marble is the heaviest?

The list of questions can be really long and is actually never-ending, but with the concepts you've learned from our other posts and this quick question-and-answer post, I hope you all succeed. ALL THE BEST!